Lin Junhao, an undergraduate majoring in Electronic and Information Engineering (Class of 2022) at the School of Artificial Intelligence, has published a research paper as first author titled “Multi-modal Cross-Attention Guided Network for Audio-Visual Quality Evaluation via Visual Saliency and Mel-spectrum Features”. The paper appeared online in IEEE Transactions on Circuits and Systems for Video Technology (TCSVT), an internationally authoritative journal in the field of video processing. The journal is ranked as a Top journal in Zone 1 of the Chinese Academy of Sciences (CAS) journal ranking, in both its major and minor categories, and its latest impact factor is 11.1. Taizhou University also recognizes it as a flagship journal in science and engineering, reflecting both its academic influence and its industry recognition. Professor Cui Yueli (Senior Experimentalist) is the sole corresponding author of the paper, and Taizhou University is the first affiliation. Notably, this is the first flagship scientific achievement in science and engineering completed with an undergraduate as first author since the School of Artificial Intelligence was established, which showcases the school’s effectiveness in cultivating top innovative talent and conducting high-level scientific research.

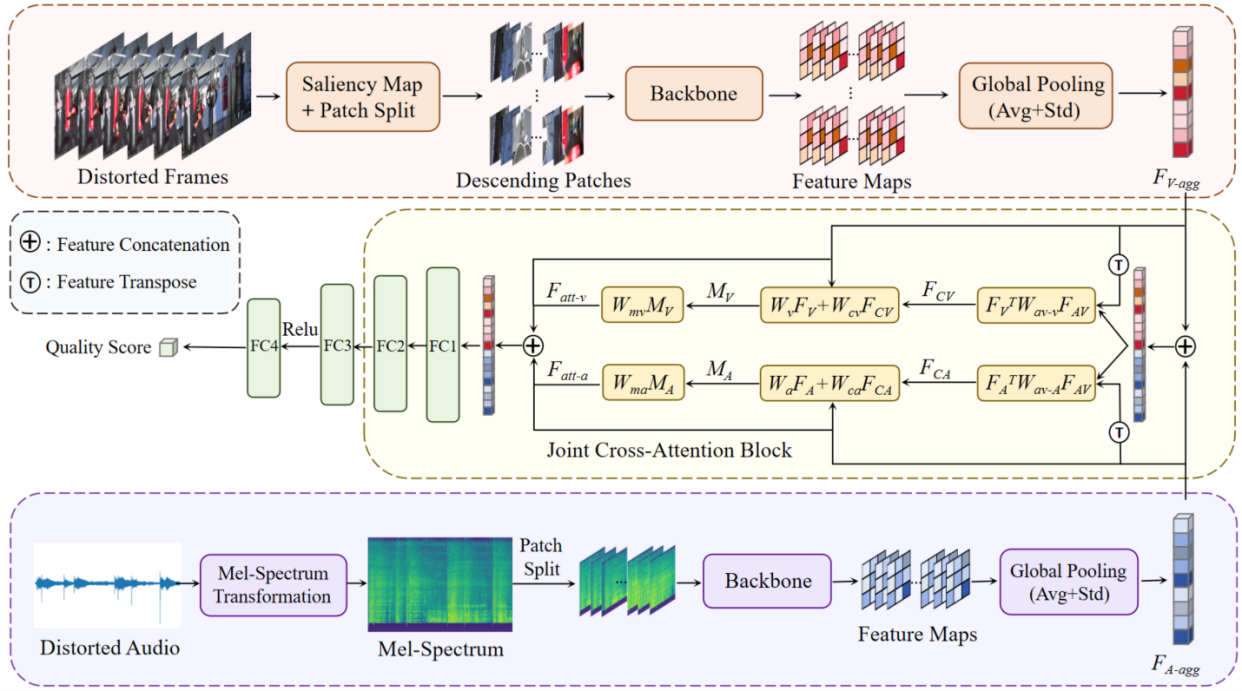

Figure 1: System Architecture of the Proposed Model

Both traditional visual quality assessment models and existing models designed specifically for joint audio-visual quality assessment have limitations in accurately evaluating the perceptual quality of audio-visual signals. To address this core challenge, the research draws on the perceptual characteristics of the human auditory and visual systems, analyzes in depth how the two modalities interact, and proposes a novel multimodal cross-attention guided network designed specifically for audio-visual quality assessment. Experimental results show that the model can automatically and effectively evaluate the joint quality of experience of audio-visual signals, and that its predictions correlate highly with human audio-visual perception. The work is expected to find application in core scenarios such as quality optimization for streaming media services and experience assurance for immersive VR/AR media. It can also serve as an objective evaluation benchmark for enhancement technologies such as the optimization of audio and video coding algorithms, providing technical support for the high-quality development of the audio-visual industry.
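For readers unfamiliar with the term, the general idea of cross-attention between two modalities can be illustrated with a minimal NumPy sketch. This is not the architecture from the paper: the feature shapes, the random projection matrices, and the names `cross_attention`, `visual`, and `audio` are all assumptions made for illustration, standing in for saliency-guided visual features and Mel-spectrum audio features.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, context, w_q, w_k, w_v):
    """Scaled dot-product cross-attention.

    Queries come from one modality and keys/values from the other,
    so each query position gathers information from the other stream.
    """
    q = queries @ w_q                      # (T_q, d)
    k = context @ w_k                      # (T_c, d)
    v = context @ w_v                      # (T_c, d)
    scores = q @ k.T / np.sqrt(q.shape[-1])
    weights = softmax(scores, axis=-1)     # each row sums to 1
    return weights @ v, weights

rng = np.random.default_rng(0)

# Hypothetical inputs: 8 video frames with 32-dim visual features,
# and 20 audio segments with 16-dim Mel-spectrum features.
visual = rng.standard_normal((8, 32))
audio = rng.standard_normal((20, 16))

# Hypothetical (untrained) projections into a shared 24-dim space.
d = 24
w_q = rng.standard_normal((32, d)) * 0.1
w_k = rng.standard_normal((16, d)) * 0.1
w_v = rng.standard_normal((16, d)) * 0.1

# Visual features attend to audio features.
attended, weights = cross_attention(visual, audio, w_q, w_k, w_v)
print(attended.shape, weights.shape)
```

In a trained model the projection matrices would be learned, and the attended features would typically be fused with the original stream before a regression head predicts the quality score; those steps are omitted here.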

This research was funded by the General Program of the National Natural Science Foundation of China (No. 62471328) and the Key Program of the Zhejiang Provincial Natural Science Foundation (No. LZ26F020014).

Paper link: https://ieeexplore.ieee.org/document/11345165